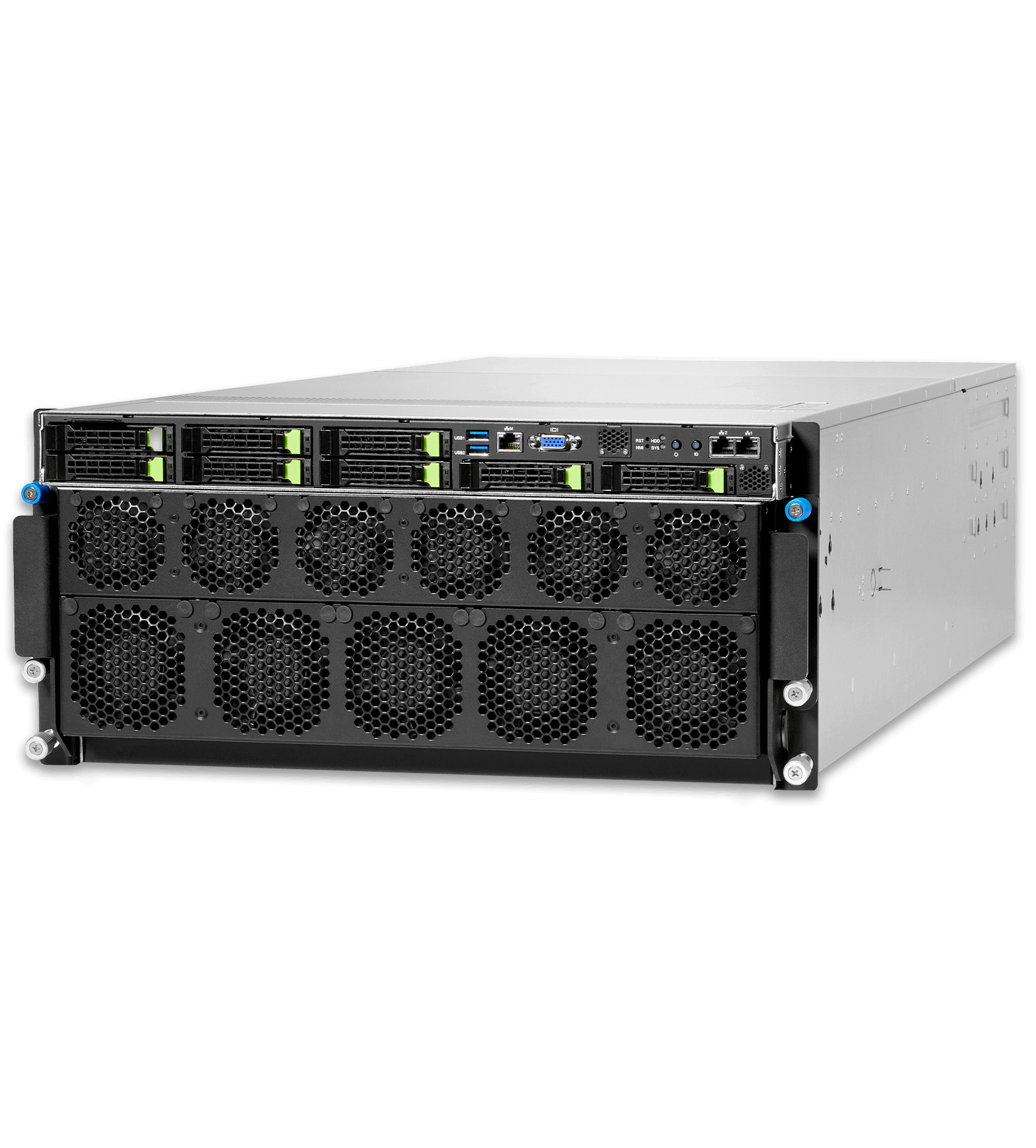

HPE Cray XD670

Accelerate AI performance for Large Language Model training, Natural Language Processing, and multimodal training.

Boost AI performance for GPU-intensive workloads

Develop and train larger AI models faster and accelerate time-to-results with unprecedented efficiency, precision, and detailed insights.

Scale to accommodate growing AI workloads

Leverage breakthrough innovation with fully integrated solutions from the hardware to the software layer to take on workloads of any size and scope.

Accelerate AI initiatives

Deploy AI at scale with a solution optimized for workloads in Natural Language Processing (NLP), Large Language Model (LLM) training, and multimodal training.

Meet evolving business demands with optimum flexibility

Improve power efficiency with a direct liquid cooling option and create a flexible environment with a broad range of supported technologies, including accelerators, storage, and networking.

Boost AI performance with a top-scoring server in recent MLPerf® benchmarks

HPE Cray XD670 delivered six #1 results in the MLPerf® Inference v5.1 tests, including in computer vision and in LLM chat Q&A and text generation.

Learn more about the GreenLake unified platform experience

GreenLake is the cloud that delivers a unified platform experience, allowing enterprises to simplify IT, reduce costs, and transform faster. See how GreenLake can help your enterprise streamline IT operations, optimize your entire hybrid environment, unify and secure data, and accelerate AI from pilot to production.