What is AIOps?

AIOps, or artificial intelligence for IT operations, refers to the use of artificial intelligence, such as machine learning (ML), generative AI (GenAI), and agentic AI, to automate the identification and resolution of common IT issues or to improve operational efficiency.

Within the networking space, AIOps automates manually intensive tasks to simplify and streamline operations across complex wired, wireless, campus, branch, WAN, data center, and cloud networks. It uses high-quality data, intelligent analysis, and contextual understanding to optimize network operations and, when authorized, to let the network heal itself autonomously. This allows operations teams to shift their focus toward strategic initiatives that deliver greater value.

Agentic AI is reshaping AIOps by accelerating the shift toward autonomous, self-driving networks. Through predictive analytics and actionable insights, it supports smarter, more scalable network operations that enhance user experiences. For example, AIOps can detect a non-compliant wireless access point or switch and, if authorized, initiate a software upgrade without human intervention.

Published: October 16, 2025

Why is AIOps important?

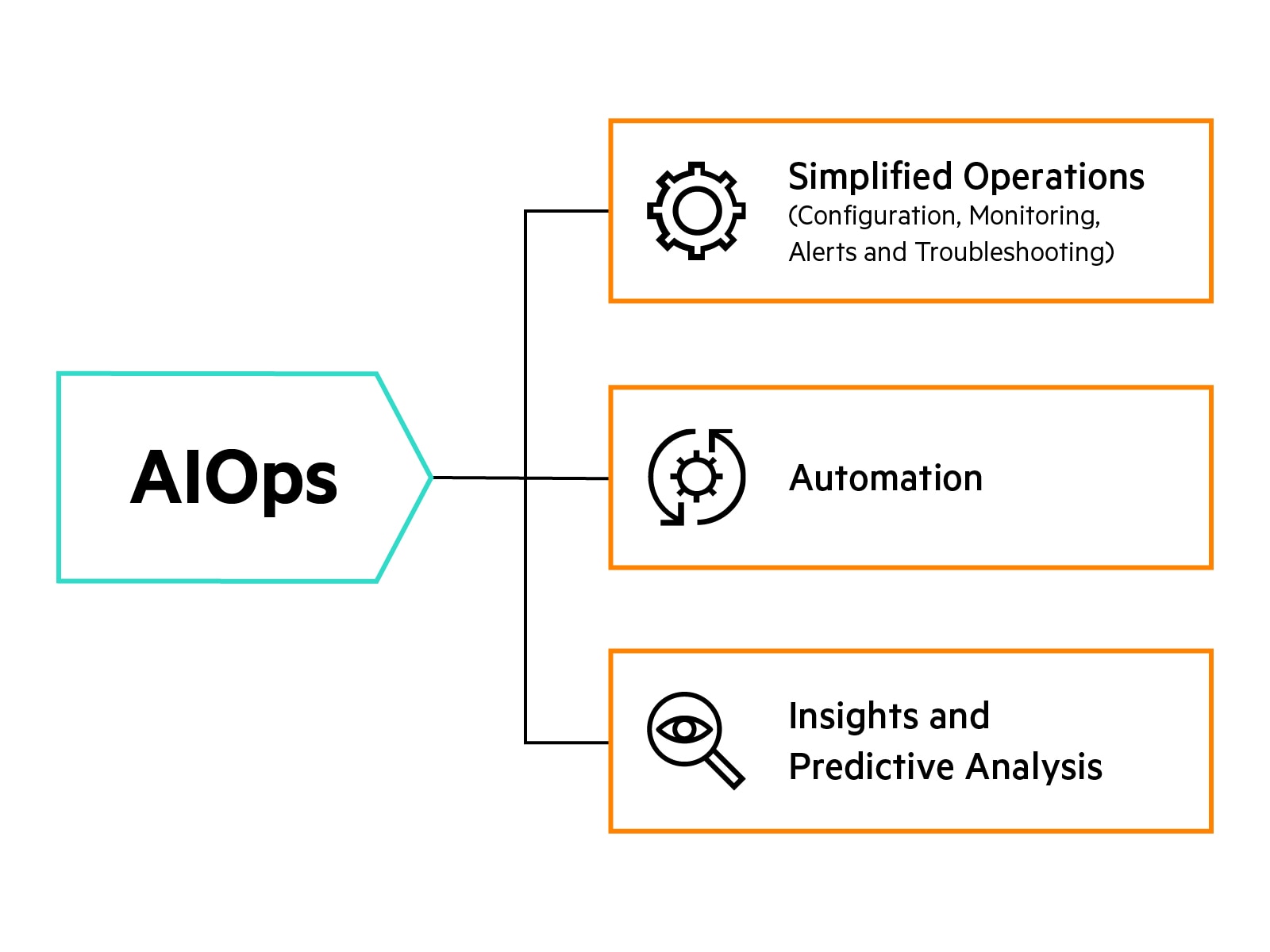

AIOps detects issues affecting performance, security, or user experiences, and responds with recommendations or autonomous fixes. It automates complex workflows, improving efficiency and minimizing human-induced delays.

AIOps empowers IT teams with predictive analytics, anomaly detection, and event correlation. With these capabilities, operators can proactively identify and resolve issues before they impact user experiences, application performance, or system availability, delivering seamless experiences with advanced intelligence and automation.

Beyond automating complex workflows and reducing manual effort, its real strength lies in its ability to scale across diverse environments, from campus networks to cloud infrastructure, adapting to evolving business needs. This adaptability, combined with real-time intelligence, drives greater efficiency across the organization.

How does AIOps work?

AIOps works by ingesting and consolidating billions of data points from diverse sources (applications, logs, events, alerts, patterns, and more). It then processes that data with machine learning or deep learning (DL) algorithms, orchestrated by agentic AI, to deliver real-time insights such as quality of experience (QoE), root cause analysis, and anomaly detection.

AIOps continuously scans for patterns, correlations, and anomalies that may indicate emerging issues or performance deviations. It applies techniques like clustering, classification, and predictive analytics to automatically group related events, filter out noise, and identify root causes.
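As a rough illustration of grouping related events and filtering out noise, the sketch below merges events from the same device into one incident when they arrive close together in time. It is a simple time-window heuristic standing in for the clustering techniques mentioned above, not a description of how any particular AIOps product works. The `Event` fields, `correlate_events`, `filter_noise`, and the 60-second window are all hypothetical names and values chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    timestamp: float  # seconds since an arbitrary start (assumed unit)
    device: str       # hypothetical device identifier
    message: str


def correlate_events(events, window_seconds=60.0):
    """Group events per device into incidents.

    Consecutive events from the same device join the same incident when
    the gap between them is at most window_seconds.
    """
    by_device = defaultdict(list)
    for event in events:
        by_device[event.device].append(event)

    incidents = []
    for device_events in by_device.values():
        device_events.sort(key=lambda e: e.timestamp)
        current = [device_events[0]]
        for event in device_events[1:]:
            if event.timestamp - current[-1].timestamp <= window_seconds:
                current.append(event)
            else:
                incidents.append(current)
                current = [event]
        incidents.append(current)

    # Present incidents in the order they started.
    incidents.sort(key=lambda group: group[0].timestamp)
    return incidents


def filter_noise(incidents, min_events=2):
    """Drop incidents with fewer than min_events events (treated as noise)."""
    return [group for group in incidents if len(group) >= min_events]
```

In practice the grouping key would likely include more context than the device name (site, client, application), and a learned model would replace the fixed window. The structure is the same: group first, then suppress groups that do not meet a relevance bar.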

AIOps platforms often leverage natural language processing (NLP) to interpret unstructured data (like incident tickets or chat messages) and use automation engines to trigger remediation workflows or alert IT teams.

An effective AIOps platform reduces false positives and alarm fatigue, so operators can detect issues proactively and resolve them before they affect end-user experiences.

How does AIOps deliver insights in an enterprise network environment?

AIOps uses telemetry collected from networks, client devices, and applications to create baselines that automatically help identify issues, determine root causes, and deliver optimization guidance in real time.
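One simple way to picture a baseline is a trailing window of recent telemetry samples, with a new sample flagged when it sits far outside that window's normal spread. The sketch below uses a z-score test for this. It is a minimal example under stated assumptions: the function name `find_anomalies`, the window of 20 samples, and the threshold of 3 standard deviations are illustrative choices, and production systems typically use more robust, seasonality-aware models.

```python
from statistics import mean, stdev


def find_anomalies(samples, baseline_size=20, z_threshold=3.0):
    """Return indices of samples that deviate strongly from a trailing baseline.

    Each sample is compared with the mean and standard deviation of the
    baseline_size samples that precede it.
    """
    if baseline_size < 2:
        raise ValueError("baseline_size must be at least 2")

    anomalies = []
    for i in range(baseline_size, len(samples)):
        baseline = samples[i - baseline_size:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change as a deviation.
            if samples[i] != mu:
                anomalies.append(i)
            continue
        if abs(samples[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies
```

Note that a flagged sample still enters the baseline for later samples in this sketch, which temporarily widens the spread. Excluding flagged points from the window is a common refinement.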

AIOps can include the use of the following AI techniques:

- Classification AI (including machine learning): Algorithms with the ability to learn about and adapt to changes in the environment. They can change or create new algorithms to identify problems earlier and recommend effective solutions.

- Generative AI (GenAI): AI capable of generating text, images, video, or other data using generative models, often in response to prompts. Generative AI models, including large language models (LLMs), learn the patterns and structure of their input training data and then generate new data that has similar characteristics. An example of GenAI that uses LLMs is OpenAI’s ChatGPT.

- Agentic AI: Agentic AI leverages intelligent, self-learning agents that can reason, collaborate, and act across domains. These agents function as domain experts, breaking down complex problems into manageable subtasks that are delegated and resolved autonomously.

What are the networking use cases for AIOps?

AIOps helps address many of the most common challenges IT teams face today when it comes to operating their networks. These include:

- Maintaining network configuration compliance: Static device settings do not keep up with changing business needs. AIOps continuously monitors network operations and recommends or automatically makes optimization changes.

- Addressing changing business needs: Manually configuring Service Level Expectations (SLEs) is costly and time-consuming. With AIOps, important network thresholds are automatically defined, monitored, and adjusted based on environmental changes.

- Resolving network issues quickly: In most IT organizations, help desk calls are the primary way problems are identified, which is expensive and inefficient. The preemptive insights provided by AIOps help identify issues before they impact users or IoT devices, which reduces help desk calls.

- Replicating intermittent issues: Many IT teams spend hours or days tracking down intermittent problems because they are difficult to replicate. Automated, always-on monitoring via AIOps distinguishes intermittent problems from persistent ones, with built-in data capture for diagnosis.

- Increasing network complexity: Troubleshooting and optimization tasks consume over 50% of IT’s time. AIOps solves this challenge by providing key insights such as the reasons for failures, root cause analysis, and repair recommendations.

- Lacking resources and skills: Lack of resources and training is a constant point of contention in many IT organizations. AIOps-driven insights, such as GenAI-powered search features, are designed to assist and enhance the team’s knowledge base.
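The configuration compliance use case in the list above can be sketched as a check that compares each device against a required minimum firmware version and then either recommends or schedules an upgrade, depending on whether autonomous action has been authorized. Everything here is hypothetical: the `Device` fields, the `plan_actions` function, and the action labels are assumptions made for illustration, and real firmware version schemes may not be purely numeric.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    firmware: str         # dotted numeric version, e.g. "10.4.2" (assumed format)
    auto_remediate: bool  # True if operators authorized autonomous fixes


def parse_version(version):
    """Turn "10.4.2" into (10, 4, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def plan_actions(devices, minimum_firmware):
    """Map each device name to a compliance action.

    Non-compliant devices are upgraded automatically only when that has
    been authorized; otherwise the upgrade is surfaced as a recommendation.
    """
    required = parse_version(minimum_firmware)
    actions = {}
    for device in devices:
        if parse_version(device.firmware) >= required:
            actions[device.name] = "compliant"
        elif device.auto_remediate:
            actions[device.name] = "schedule_upgrade"
        else:
            actions[device.name] = "recommend_upgrade"
    return actions
```

Comparing parsed tuples rather than raw strings matters: as strings, "10.10.0" sorts before "10.9.0", which would wrongly mark a newer release as out of date.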

Benefits of AIOps for networking

- Faster troubleshooting and resolution: AIOps automates root cause analysis and incident correlation, reducing manual troubleshooting and mean time to resolution.

- Proactive issue detection: AIOps identifies anomalies and potential problems before they impact users, allowing admins to address issues proactively and reduce trouble tickets.

- Optimized network performance: AIOps analyzes traffic patterns and configuration data, providing actionable recommendations that can increase network utilization, optimize bandwidth and enable IT teams to focus on higher-value strategic initiatives.

- Reduced alert noise: Machine learning filters out irrelevant alerts and false positives, enabling admins to focus on genuine issues and improve operational efficiency.

- Automated remediation and enhanced user experience: AIOps automates routine tasks and workflows, minimizing downtime and outages. This allows IT teams to maintain higher service availability and meet network uptime requirements without increasing operational overhead.

AIOps for networking

AIOps for networking, or AI for networking, is an approach to networking that integrates security, digital experience monitoring, and support for Zero Trust architectures, ensuring networks are efficient and resilient against evolving threats.

Networks must have universal connectivity, constant uptime, high speed, and low latency, all while being secure and reliable. With innovations like GenAI search, agentic mesh, and autonomous remediation, organizations can set a new standard for intelligent networking.

Using the power of AI in a networking platform to help you optimize and manage your network is like having a team of experts with you at the poker table. Harnessing the power of AIOps helps you run your network seamlessly.

Easing concerns over AI adoption

Organizations have an exciting opportunity to elevate their network operations by embracing AIOps. Adopting new technology opens the door to greater efficiency, smarter decision-making and improved service quality. Here are some important aspects to consider as you explore AI adoption for your organization:

- Security and ethics: Determine what data the AI engine is using and how that data is secured. Ensure that the vendor follows ethical AI principles and guidelines.

- Integration: An effective AIOps solution should streamline operations rather than add complexity. Look for a solution that can integrate with existing infrastructure or is built into the IT solution.

- Efficacy: Evaluate how AIOps has evolved over time, beginning with the point of its initial integration. An effective AIOps system should deliver accurate, real-time insights and proactively alert operators to high-priority issues, without contributing to alert fatigue. Its performance should continuously improve through a closed-loop cycle of feedback and development.

- Real-world examples: Look for situations where the AIOps solution has provided real results for customers.

HPE and AIOps

Maintaining a network today demands constant visibility and intelligent automation. HPE Networking delivers a secure, AI-native network with AIOps to realize the vision of a self-driving network, one that helps IT teams optimize network operations and heals itself autonomously.

HPE Networking with AIOps can help:

- Identify network, security and application performance issues faster with minimal or no human input before they affect users and businesses.

- Predict bad user experiences using collaboration application data (e.g., Zoom, Teams) and unstructured application data to pinpoint root causes and mitigate issues with self-driving autonomy, helping reduce downtime, improve service quality, and enhance user satisfaction from client to cloud.

- Eliminate manual troubleshooting, which helps cut costs, resolve issues faster, and boost IT productivity by allowing teams to focus on strategic initiatives instead of routine busywork.

- Optimize the network by providing practical recommendations in response to network changes, such as onboarding IoT devices, scaling WAN capacity, or correcting misconfigured VXLANs, to maintain performance, reduce configuration errors, and support efficient scaling.

- Deliver instant answers, configuration guidance, and troubleshooting tips through an agentic AI-powered search interface that acts as a customer-facing AI assistant, enabling faster issue resolution, shorter support wait times, and improved self-service capabilities with expert-level assistance.

- Leverage terabytes of data from thousands of global installations and network devices, combined with deep networking and security expertise and a strong team of data scientists (who validate our data lake), to enable faster issue detection, smarter decision-making, and shorter resolution times.

AIOps FAQs

What problems does AIOps solve?

AIOps analyzes and consolidates data from multiple sources. It observes and learns details from the environment and provides assessments based on overall quality of experience (QoE). In this way, AIOps can correlate network activities to determine and resolve problems before they’re noticed by end users or IT operations staff.

AIOps provides root cause analyses of problems as or before they occur based on ML algorithms and contextualized data. Above all, AIOps democratizes the ability to troubleshoot among IT personnel with different levels of expertise, increasing overall operations efficiency across the team.

What are the components of AIOps?

An AIOps platform uses ML and GenAI algorithms and contextualized data to provide root cause analyses and automatically remediate simple problems in the network. AIOps requires an AI engine able to correlate events and AI algorithms that extract knowledge or patterns from a set of observations. A virtual network assistant using natural language processing (NLP), enhanced by natural language understanding (NLU) and natural language generation (NLG), offers a powerful conversational interface that can contextualize requests, accelerate troubleshooting, and make intelligent decisions or recommendations to streamline operations.

What are the key capabilities of AIOps?

- Problem isolation/root cause analysis: With the large volume of data in today’s networks, it’s difficult to pinpoint problems raised in trouble tickets, much less those that haven’t been brought to the attention of IT. AIOps correlates events in real time by processing contextualized data, allowing operations teams to identify and rectify issues in a timely manner.

- Data-driven decision-making: AI algorithms drive data-based analysis, which offers operational recommendations or remediations rather than predetermined responses to networking faults or anomalies. This data-centric approach improves the operations staff’s troubleshooting efficiency.

- Predictive reporting: AIOps predicts network behavior and offers recommendations or remediations for fixing degraded performance and other anomalies within the network. This fundamental shift benefits operations teams by allowing them to be proactive in managing network operations, rather than chasing down issues that have already had an impact on users and the business. As a result, IT frees up time once spent in firefighting mode to tackle future business objectives.
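To make the idea of predictive reporting concrete, the sketch below fits a straight line to recent measurements (for example, link utilization sampled at a fixed interval) and estimates how many more intervals remain before the trend reaches a threshold. This is a deliberately simple least-squares extrapolation, assumed here for illustration. The function names `fit_line` and `intervals_until` are hypothetical, and real platforms would be expected to use richer forecasting models.

```python
def fit_line(values):
    """Ordinary least-squares fit of values against their indices 0..n-1.

    Returns (slope, intercept).
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def intervals_until(values, threshold):
    """Estimate how many future intervals until the trend reaches threshold.

    Returns 0.0 if the fitted trend is already at or above the threshold,
    and None if the trend is flat or falling (no crossing is predicted).
    """
    slope, intercept = fit_line(values)
    last_fitted = intercept + slope * (len(values) - 1)
    if last_fitted >= threshold:
        return 0.0
    if slope <= 0:
        return None
    return (threshold - last_fitted) / slope
```

An estimate like this lets an operator act while there is still headroom, for instance by adding capacity before utilization reaches the point where users notice degraded performance.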